Recurrent Neural Networks (RNNs) are among the most advanced algorithms in supervised deep learning. Neural networks are loosely inspired by the inner workings of the brain, so it helps to relate the different types of network to different parts of it.

The human brain has three main parts: the cerebrum, the cerebellum, and the brainstem, which connects the brain to the rest of the body. The cerebrum in turn has four lobes:

- Temporal Lobe: associated with long-term memory. Its counterpart is the Artificial Neural Network (ANN), whose learned weights and biases are what the network "remembers" in order to make predictions.

- Occipital Lobe: associated with vision and image recognition. Its counterpart is the Convolutional Neural Network (CNN).

- Frontal Lobe: associated with short-term memory. Its counterpart is the Recurrent Neural Network (RNN).

- Parietal Lobe: responsible for sensation, perception, and spatial construction. It has no clear counterpart yet but could inspire future models.

What is a Recurrent Neural Network (RNN)?

Recurrent nets are a type of Artificial Neural Network (ANN) designed to recognize patterns in sequences of data. They take the order of the data into account, so they have a temporal dimension. They can be used in speech recognition, language modeling, machine translation, image captioning, and stock market prediction.

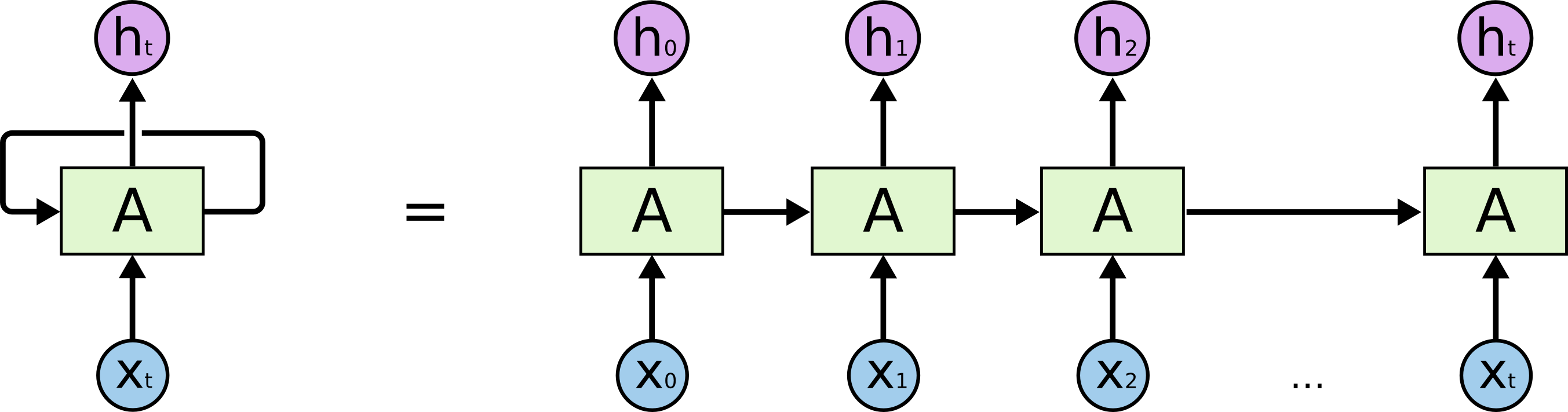

The main difference between an Artificial Neural Network (ANN) and a Recurrent Neural Network (RNN) is that an RNN feeds its previous result back in alongside the current input. The decision made by an RNN at time ‘t’ depends on the result obtained at time ‘t-1’. An RNN therefore has two inputs at each timestep: the present input and the recent past. Combining the two lets the network capture correlations between events that are separated in time and use them for later predictions.

W: weights applied to the current input x_t

x_t: current input at time ‘t’

h_t-1: result (hidden state) at time ‘t-1’

U: weights applied to the previous result h_t-1

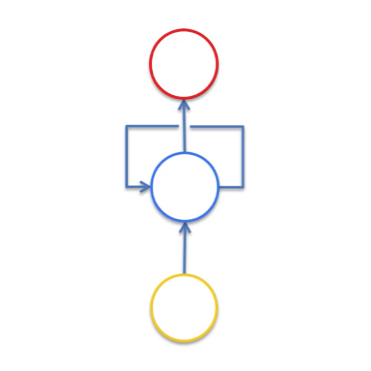
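The symbols above are commonly combined into the update h_t = tanh(W·x_t + U·h_t-1), where W is applied to the current input, U to the previous result, and the same W and U are reused at every timestep. The minimal sketch below assumes tanh as the activation, omits the bias term, and uses made-up weight values; `matvec` and `rnn_step` are hypothetical helper names. It shows that the same input produces a different result once h_t-1 carries information from the previous step.

```python
import math

def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]

def rnn_step(x_t, h_prev, W, U):
    """One RNN timestep: h_t = tanh(W·x_t + U·h_prev).

    W maps the current input x_t into the hidden space,
    U maps the previous result h_prev into the hidden space.
    tanh is an assumed activation; the bias term is omitted for brevity.
    """
    wx = matvec(W, x_t)
    uh = matvec(U, h_prev)
    return [math.tanh(a + b) for a, b in zip(wx, uh)]

# Hypothetical toy weights: 1-dimensional input, 2-dimensional hidden state.
W = [[0.5], [-0.3]]
U = [[0.8, 0.1], [0.2, 0.7]]

h0 = [0.0, 0.0]
h1 = rnn_step([1.0], h0, W, U)  # first timestep: no history yet
h2 = rnn_step([1.0], h1, W, U)  # same input, but h1 now carries the past
print(h1)
print(h2)  # differs from h1 because the previous result feeds back in
```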

Visualization of a Recurrent Neural Network (RNN)

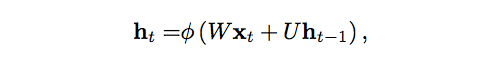

An RNN is easiest to visualize by watching it emerge from an ANN. Take a simple ANN with 3 input values, 1 hidden layer of 4 nodes, and 2 output values.
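For comparison, here is a minimal sketch of the feed-forward 3-4-2 ANN just described. The weights are made-up values, tanh in the hidden layer and an identity output are assumed choices, and `dense` and `identity` are hypothetical helper names. Each call depends only on the current input; nothing is remembered between calls, which is the gap an RNN fills.

```python
import math

def dense(weights, biases, inputs, activation):
    """Fully connected layer: activation(weights · inputs + biases)."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def identity(z):
    return z

# Hypothetical weights for the 3 -> 4 -> 2 network.
W_hidden = [[0.1, 0.2, 0.3],
            [0.0, -0.1, 0.2],
            [0.5, 0.5, 0.0],
            [-0.2, 0.1, 0.4]]   # 4 hidden nodes, each with 3 incoming weights
b_hidden = [0.0, 0.0, 0.0, 0.0]
W_out = [[0.3, -0.2, 0.1, 0.5],
         [0.0, 0.4, -0.3, 0.2]]  # 2 outputs, each with 4 incoming weights
b_out = [0.0, 0.0]

x = [1.0, 2.0, 3.0]              # 3 input values
hidden = dense(W_hidden, b_hidden, x, math.tanh)
output = dense(W_out, b_out, hidden, identity)
print(len(hidden), len(output))  # 4 2
```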

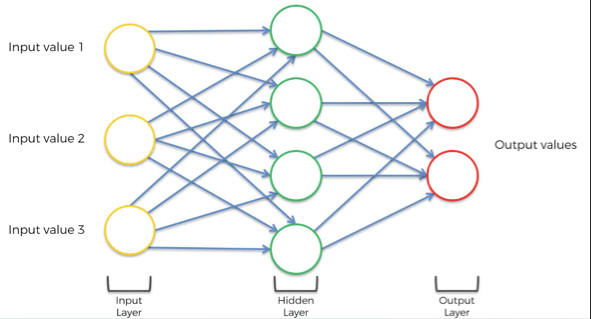

An ANN can be turned into an RNN by squashing the whole network.

It helps to imagine the ANN as a 3-D structure in which the nodes are balls and the connections are wires. Building the fig-1 network this way and looking at it from the top gives the view in fig-2.

Simple representation of a network where each layer is a vector of values

This is an old-school representation of an RNN

The network in fig-4 shows that the hidden layer not only produces an output but also feeds back into itself.

Unrolling the network in fig-4 over time gives the network in fig-5. It shows how data flows through time: which input the network receives and which values it produces at each timestep.
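The feedback loop and its unrolled form can be sketched as a plain loop over timesteps. This minimal sketch assumes the 3-input, 4-node, 2-output sizes from the example above, a tanh hidden activation, no biases, and made-up weights; W, U, and V are hypothetical names for the input-to-hidden, hidden-to-hidden, and hidden-to-output weights. Each loop iteration corresponds to one column of the unrolled diagram, and the same weights are reused in every column.

```python
import math

def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in M]

def unrolled_rnn(xs, W, U, V, h0):
    """Run the recurrent network over a sequence, one timestep at a time.

    The same W (input -> hidden), U (hidden -> hidden) and V (hidden -> output)
    are reused at every step; only the hidden state h changes.
    Returns the output produced at each timestep and the final hidden state.
    """
    h = h0
    outputs = []
    for x_t in xs:
        pre = [a + b for a, b in zip(matvec(W, x_t), matvec(U, h))]
        h = [math.tanh(z) for z in pre]  # hidden layer feeds back into itself
        outputs.append(matvec(V, h))     # 2 output values at this timestep
    return outputs, h

# Hypothetical weights for 3 inputs, 4 hidden nodes and 2 outputs.
W = [[0.1, 0.2, 0.3],
     [0.0, -0.1, 0.2],
     [0.5, 0.5, 0.0],
     [-0.2, 0.1, 0.4]]                                              # 4 x 3
U = [[0.5 if i == j else 0.1 for j in range(4)] for i in range(4)]  # 4 x 4
V = [[0.3, -0.2, 0.1, 0.5],
     [0.0, 0.4, -0.3, 0.2]]                                         # 2 x 4

xs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # 3 timesteps
outputs, h_final = unrolled_rnn(xs, W, U, V, [0.0] * 4)
print(len(outputs))  # 3
```

Feeding the same input twice gives two different outputs, because the second step also sees the hidden state left by the first.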

Types of Recurrent Neural Networks (RNNs)

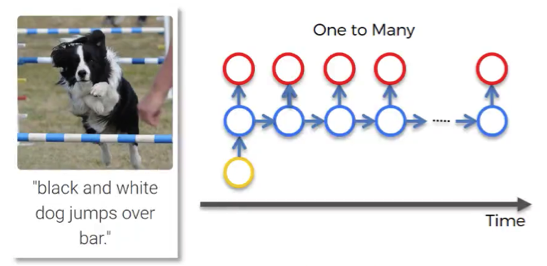

One to many: This network has one input and multiple outputs. It can be used for image captioning: the image is first fed to a Convolutional Neural Network (CNN), and the features it extracts are then passed to the RNN, which generates the caption. The CNN provides the features, while the RNN turns them into a coherent sentence.

One to many

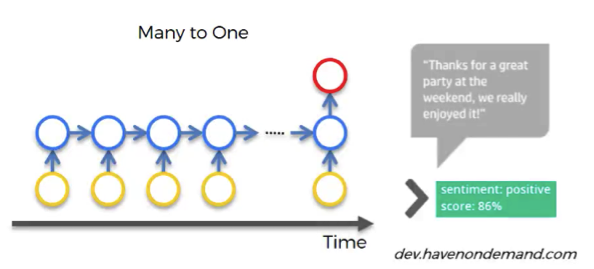

Many to one: This network takes multiple inputs and makes a single prediction. It can be used for sentiment analysis, where a whole sentence is read and a single label is produced.

Many to one

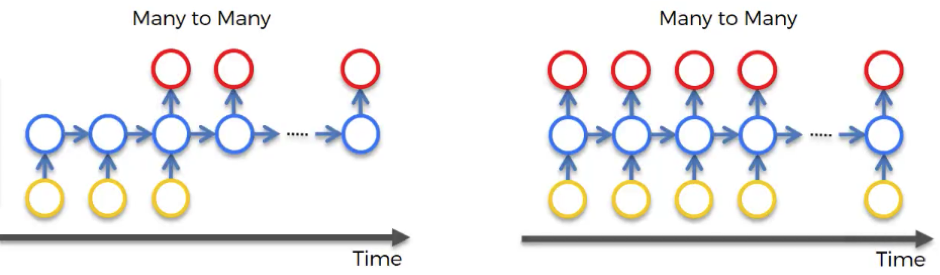

Many to many: This network has multiple inputs and multiple outputs. It can be used to generate subtitles. A standard CNN is not designed for this, because understanding what is happening now requires context about what happened previously, and that short-term memory is exactly what an RNN provides.
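The three patterns differ mainly in which inputs are fed in and which outputs are kept. The sketch below wraps one hypothetical scalar cell three ways; the weights `W_IN`, `U_REC`, `V_OUT` and the tanh activation are made-up assumptions. In a real captioning model the single input would be CNN features rather than one number, and feeding each output back in as the next input is an assumed (though common) generation strategy, not the only one.

```python
import math

# Hypothetical scalar weights shared by every timestep.
W_IN, U_REC, V_OUT = 0.5, 0.8, 1.5

def step(x_t, h_prev):
    """One timestep: new hidden state and the output read from it."""
    h_t = math.tanh(W_IN * x_t + U_REC * h_prev)
    return h_t, V_OUT * h_t

def many_to_one(xs):
    """Read the whole sequence, emit one prediction (e.g. a sentiment score)."""
    h, y = 0.0, 0.0
    for x_t in xs:
        h, y = step(x_t, h)
    return y  # only the last output is kept

def many_to_many(xs):
    """Emit one output per input (e.g. one subtitle token per frame)."""
    h, ys = 0.0, []
    for x_t in xs:
        h, y = step(x_t, h)
        ys.append(y)
    return ys

def one_to_many(x, length):
    """Take a single input and generate `length` outputs.

    Assumption: after the first step, each output is fed back in as the
    next input.
    """
    h, x_t, ys = 0.0, x, []
    for _ in range(length):
        h, y = step(x_t, h)
        ys.append(y)
        x_t = y
    return ys

seq = [1.0, -0.5, 0.25]
print(many_to_one(seq))          # one prediction for the whole sequence
print(len(many_to_many(seq)))    # 3
print(len(one_to_many(1.0, 4)))  # 4
```

Note that many-to-one is just many-to-many with every output except the last thrown away.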

This was the basic intuition behind RNNs. I hope you found it useful.